Formal Software Design

Alloy is an open-source language and analyzer for software modeling. An Alloy model is a collection of constraints that describe a set of structures, e.g. all possible security configurations of a web application. Alloy’s tool, the Alloy Analyzer is a solver that takes the constraints of a model and finds structures that satisfy them.

The Alloy Analyzer leverages a SAT solver, and this precludes Alloy from analyzing optimization problems. propose AlloyMax, an extension of Alloy that can analyze problems with optimal solutions, soft constraints and priorities. AlloyMax adds new language constructs for specifying optimization problems, and uses an analysis engine that leverages a Maximum Satisfiability (MaxSAT) solver. also provide a translation mechanism from a first-order relational logic into a weighted conjunctive normal form (WCNF) that the MaxSAT solver expects.

To understand what is first-order relational logic, and how it differs from other kinds of logic, A relational logic primer — Formal Software Design with Alloy 6 seems like a good start.

Should have paid more attention in ELE 206: Introduction to Logic Design .

Dynamic Program Analysis

Dynamic program analysis is a technique for monitoring, understanding, and potentially intervening in program behavior during its execution . Types of dynamic program analysis include code coverage, memory error detection, fault localization, invariant inference, concurrency errors, program slicing, and performance analysis. Most techniques involve code instrumentation, which incurs runtime overhead.

propose module recontextualization, a dynamic program analysis approach for dynamic languages . Dynamic languages feature a module-import mechanism that loads the code at runtime as a string. The approach uses lightweight transformations on the module’s string representation to insert developer-provided, analysis-specific code into the module before it is loaded. This allows the developer to capture all interactions around the module in unmodified production language runtime environments. Analysis via module recontextualization is coarse (module-level vs. instruction-level), but has low runtime overhead (\(\approx 4\%\)). The authors further provide an implementation for JavaScript and Racket ecosystems .

Probabilistic Programming

propose loop perforation, a general technique to trade accuracy for performance by transforming loops to execute a subset of their iterations. They were able to make programs twice as fast, while changing the result by less than \(10\%\).

for (i = 0; i < N; i++) { ... } // Original loop

d = 1 / ( 1 - r); // r = perforation rate, in the range [0, 1)

for (i = 0; i < N; i += d) { ... } // Loop after an interleaving perforation

proceed in two phases. The criticality testing phase finds the set of tunable loops \(P\) in an application \(A\), given a set of representative inputs \(T\), a set of perforation rates \(R\), and an accuracy bound \(b\). The second phase finds a subset \(S \subseteq P\) in \(A\), given \(T\) and \(b\).

For a loop \(l_i\) to be in \(P\), it must speed up the program, incur an accuracy loss that is less than \(b\), and not cause memory errors in \(A\). The memory errors are found running \(A\) under Valgrind.

Applications that are amenable to loop perforation tend to have these computation patterns:

- Iterating over a search space of items. Perforation skips some of the items.

- Using a search metric to drive a search for the most desirable item. Perforation produces a less accurate but efficient metric.

- Performing Monte-Carlo simulation. Perforation evaluates fewer samples.

- Repeatedly improving on an approximate result to obtain a more accurate result. Perforation uses fewer improvement steps.

- Traversing a data structure and updating elements with computed values. Perforation skips some of the elements.

Version Control Systems (VCS)

VCS track line-level changes, which lose historical context after

transformations like moves/renames across the file system, and groups of lines

being moved within files. Furthermore, developers are usually interested in a

slice of the history, and not the history for the whole file. propose CodeShovel, a tool for building method histories on demand that

outperforms tools from research (e.g. FinerGit which needs pre-processing), and

practice (e.g. IntelliJ’s git history, git log -L). Field study with 16

industrial devs confirmed CodeShovel’s correctness, low runtime overhead, and

versatility.

have a public website that demonstrates CodeShovel, given the URL of a git repo. Tried it out to see if it would catch this commit from a past project of mine: Refactor: Move non-flask related actions from user.py to user_model.py · dchege711/cos333_tiger_leagues@0410b56 . I’m impressed!

. Credits: https://se.cs.ubc.ca/CodeShovel/.](/img/computer-science/code-shovel-sample.jpg)

I didn’t know about , which has two forms:

-L<start>,<end>:<file> and -L:<funcname>:<file>, where start, end and

funcname can be regex. If end is a regex, then the search starts at the line

given by start.

For example, git log -L '/int main/',/^}/:main.c shows how the function

main() in the file main.c evolved over time. The challenge for the dev is

constructing appropriate markers for start and end; it helps if the file is

well-formatted (e.g. indentation).

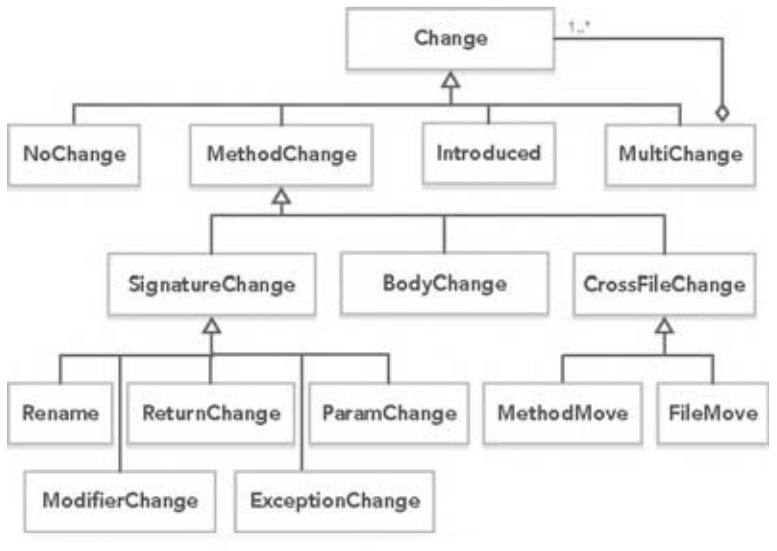

’s CodeShovel starts out with a repo, starting SHA, and current location of the method. It uses a language-specific parser to generate an Abstract Syntax Tree (AST) of all methods in the files analyzed. For a given commit, CodeShovel stores the current file path (\(\textit{path}\)), line number (\(\textit{num}\)), method signature (\(\textit{sig}\)), and method body (\(\textit{body}\)) for the specified method. It then works backwards to the preceding commit that modified the \(\textit{path}\) containing the method. The method matching algorithm is as follows:

// Inputs

// sig: method signature

// body: method body text

// path: path to file containing method

// files: list of all files changed in the commit

// Phase 1: Find unchanged method within the same file

FOREACH meth in files[path]

IF sim(meth["sig"], sig) == 1.0 && sim(meth["body"], body) == 1.0

return NO_CHANGE

// Phase 2: Find modified method within the same file

FOREACH meth in files[path]

IF sim(meth["body"], body) >= 0.75

return meth // Method found in file

// Phase 3: Find method within renamed or moved file

FOREACH file in files

FOREACH meth in file

IF sim(meth["sig"], sig) == 1.0 && sim(meth["body"], body) >= 0.5

return meth // Method found in moved file

// Phase 4: Find method modified from different file

methods = all methods in all files

// Sort methods by decreasing body similarity

methods = sort(methods, sim(entry["body"], body))

// Find highest matching method

FOREACH meth in methods

IF isShort(meth) && sim(meth["body"], body) >= 0.95 // < 20 chars = short

return meth

ELSE IF sim(meth["body"], body) >= 0.82

return meth

// No match, last commit introduced the method

return null

… where the thresholds were data-informed.

To add support for a new language, CodeShovel defines two interfaces, Parser

and Method. The rest of the code is language agnostic.

codeshovel/src/main/java/com/felixgrund/codeshovel/parser/impl at master ·

ataraxie/codeshovel

currently has implementations for Java, Python and TypeScript.

Workplace Dynamics

investigate how the mass shift to working from home (WFH) during the COVID-19 pandemic affected dev teams (n = 608). 30% thought the ability of the team to reach milestones changed: reduced productivity (general, child care), communication & collaboration challenges. 499 said their team culture changed: emphasized social interaction, more meetings, missing interactions, and increased empathy. On how teams have supported members during WFH: peer support, virtual social meetings, instant message apps, understanding personal WFH situations, and personal check-ins. On team communication and collaboration, there was a marked decrease in feeling socially connected (65%!), ability to brainstorm, communication ease, and knowledge flow. On the other hand, there was a marked increase in frequency of meetings, and team member notifications.

Glossary

- Dynamic Programming Language

- A high-level programming language, which at runtime executes many common programming behaviors that static programming languages perform during compilation, e.g. adding new code, extending objects and definitions, modifying the type system, etc. Examples: JavaScript, Python, Ruby, PHP, Perl, Common Lisp, R, Objective-C, etc..

References

- AlloyMax: bringing maximum satisfaction to relational specifications. Zhang, Changjian; Ryan Wagner; Pedro Orvalho; David Garlan; Vasco Manquinho; Ruben Martins; Eunsuk Kang. European Software Engineering Conference and Symposium on the Foundations of Software Engineering, Proceedings of the 29th ACM Joint Meeting, Aug 2021, pp. 155-167. Carnegie Mellon University; Universidade de Lisboa. doi.org . scholar.google.com . Cited 0 times as of Jan 24, 2022.

- Boolean satisfiability problem - Wikipedia. en.wikipedia.org . Accessed Jan 22, 2022.

- About Alloy. alloytools.org . Accessed Jan 22, 2022.

- Efficient Module-level Dynamic Analysis for Dynamic Languages with Module Recontextualization. Nikos Vasilakis; Grigoris Ntousakis; Veit Heller; Martin C. Rinard. European Software Engineering Conference and Symposium on the Foundations of Software Engineering, Proceedings of the 29th ACM Joint Meeting, Aug 2021, pp. 1202–1213. Massachusetts Institute of Technology; Technical University of Crete; Unaffiliated. doi.org . scholar.google.com . Cited 2 times as of Jan 24, 2022.

- Dynamic program analysis - Wikipedia. en.wikipedia.org . Accessed Jan 24, 2022.

- Dynamic programming language - Wikipedia. en.wikipedia.org . Accessed Jan 24, 2022.

- Managing Performance vs. Accuracy Trade-offs with Loop Perforation. Stelios Sidiroglou-Douskos; Sasa Misailovic; Henry Hoffmann; Martin Rinard. ACM SIGSOFT Symposium, 19th; European Conference on Foundations of Software Engineering, 13th, September 2011, Pages 124–134. Massachusetts Institute of Technology. doi.org . scholar.google.com . Cited 585 times as of Jan 30, 2022.

- CodeShovel: Constructing Method-Level Source Code Histories. Grund, Felix; Shaiful Chowdhury; Nick C. Bradley; Braxton Hall; Reid Holmes. International Conference on Software Engineering, 43rd, 2021, pp. 1510-1522. University of British Columbia. doi.org . scholar.google.com . github.com . Cited 4 times as of Feb 6, 2022.

- Git - git-log Documentation. git-scm.com . Accessed Feb 6, 2022.

- 'How Was Your Weekend?' Software Development Teams Working From Home During COVID-19. Miller, Courtney; Paige Rodeghero; Margaret-Anne Storey; Denae Ford; Thomas Zimmermann. International Conference on Software Engineering, 43rd, 2021, pp. 624-636. New College of Florida; Clemson University; University of Victoria; Microsoft Research. Cited 23 times as of Feb 16, 2022.

The Boolean Satisfiability Problem (SAT) asks whether the variables of a given Boolean formula can be satisfied (i.e. consistently replaced by the values

TRUEorFALSEin such a way that the formula evaluates toTRUE). SAT was the first problem that was proven to be NP-complete. Nonetheless, there are heuristic SAT-algorithms that solve problems involving tens of thousands of variables and formulas having millions of symbols. This is typically good enough for practical SAT problems.MaxSAT is an extension of the SAT problem that asks for the maximum number of clauses which can be satisfied by any assignment. It also has efficient approximation algorithms, but is NP-hard to solve exactly. Worse still, it is APX-complete, i.e. no polynomial-time approximation scheme unless \(P = NP\).